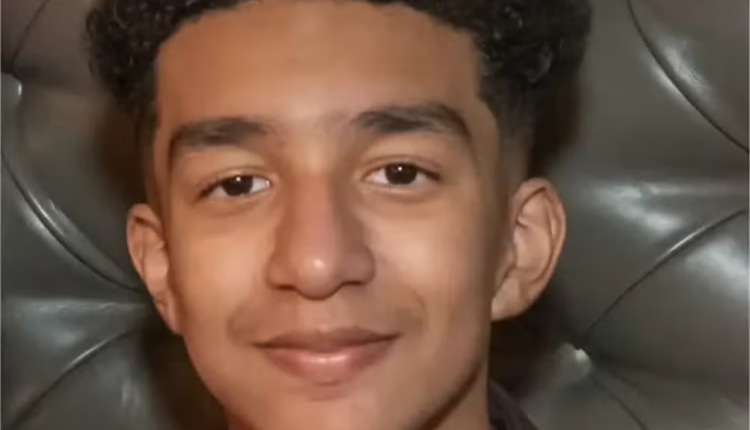

A teenage boy shot himself in the head after discussing suicide with an AI chatbot that he fell in love with. Sewell Setzer, 14, shot himself with his stepfather’s handgun after spending months talking to “Dany”, a computer programme based on Daenerys Targaryen, the Game of Thrones character.

Setzer, a ninth grader from Orlando, Florida, gradually began spending longer on Character.AI, an online role-playing app, as "Dany" gave him advice and listened to his problems, The New York Times reported.

The teenager knew the chatbot was not a real person, but as he texted the bot dozens of times a day, often engaging in role-play, Setzer started to isolate himself from the real world.

His mother claims her teenage son was goaded into killing himself by an AI chatbot he was in love with, and on Wednesday she filed a lawsuit against the makers of the artificial intelligence app.

Sewell Setzer, a ninth grader in Orlando, Florida, spent the last weeks of his life texting an AI character named after Daenerys Targaryen, a character on 'Game of Thrones.'

Right before Sewell took his life, the chatbot told him to ‘please come home’.

Before then, their chats had ranged from the romantic to the sexually charged, while others resembled two friends chatting about life.

The chatbot, which was created on role-playing app Character.AI, was designed to always text back and always answer in character.

It's not known whether Sewell knew that 'Dany,' as he called the chatbot, wasn't a real person, despite the app having a disclaimer at the bottom of all the chats that reads, 'Remember: Everything Characters say is made up!'

But he did tell Dany that he 'hated' himself and that he felt empty and exhausted.

When he eventually confessed his suicidal thoughts to the chatbot, it was the beginning of the end, The New York Times reported.

Megan Garcia, Sewell’s mother, filed her lawsuit against Character.AI on Wednesday.

She’s being represented by the Social Media Victims Law Center, a Seattle-based firm known for bringing high-profile suits against Meta, TikTok, Snap, Discord and Roblox.

Garcia, who herself works as a lawyer, blamed Character.AI for her son’s death in her lawsuit and accused the founders, Noam Shazeer and Daniel de Freitas, of knowing that their product could be dangerous for underage customers.

In Sewell's case, the lawsuit alleged the boy was targeted with 'hypersexualized' and 'frighteningly realistic experiences'.

It accused Character.AI of misrepresenting itself as ‘a real person, a licensed psychotherapist, and an adult lover, ultimately resulting in Sewell’s desire to no longer live outside of C.AI.’

Attorney Matthew Bergman told DailyMail.com he founded the Social Media Victims Law Center two and a half years ago to represent families 'like Megan's.'

Bergman has been working with Garcia for about four months to gather evidence and facts to present in court.

And now, he says Garcia is ‘singularly focused’ on her goal to prevent harm.

‘She’s singularly focused on trying to prevent other families from going through what her family has gone through, and other moms from having to bury their kid,’ Bergman said.

‘It takes a significant personal toll. But I think the benefit for her is that she knows that the more families know about this, the more parents are aware of this danger, the fewer cases there’ll be,’ he added.

As explained in the lawsuit, Sewell’s parents and friends noticed the boy getting more attached to his phone and withdrawing from the world as early as May or June 2023.

His grades and extracurricular involvement, too, began to falter as he opted to isolate himself in his room instead, according to the lawsuit.

Source: Telegraph UK
